My background spans data science, music technology, electrical engineering, and acoustics. In the past few years I have been researching and building systems for making, performing, and understanding music.

Real-time Fractional Fourier Transform (FRFT)

Real-time Fractional Fourier Transform (FRFT) implementation for audio processing and synthesis, designed for Max and Max for Live

Installation: Follow the installation guide on the GitHub Releases page to set up the Max external and patches.

Developed by Esteban Gutiérrez and me.

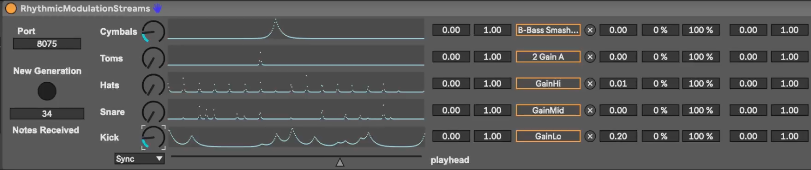

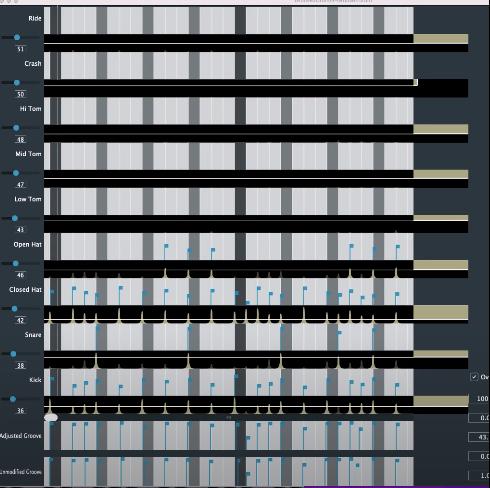

MultiStream Rhythmic Modulations

A Max4Live patch that generates multiple parallel modulation curves that are rhythmically interlocked.

This patch requires the GrooveTransformer VST to be installed.

Once downloaded, unzip the file and move the entire folder to your Max X/Packages folder or add it to your Max search path.

Developed by Nicholas Evans and me.

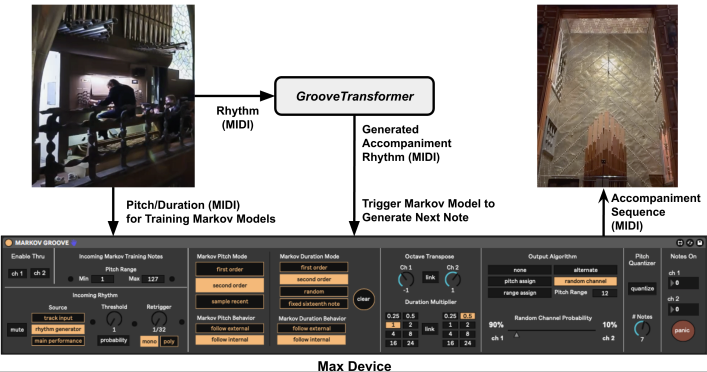

Markov Accompaniment Patch

A Max4Live patch that generates accompaniment tracks using Markov Chains.

Installation: Unzip the downloaded file and place the Markov_Groove_Device.maxpat file in your Max X/Packages folder.

To drive the Markov Accompaniment patch, you will need a rhythmic source. It was originally developed with the GrooveTransformer VST, but any MIDI rhythmic source works.
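As a rough illustration of the idea, the sketch below builds a first-order Markov chain from a short pitch sequence and draws one pitch per incoming rhythmic onset. It is not the patch's actual implementation: the training notes, the onset steps, and the function names `build_transitions` and `generate` are hypothetical, and it assumes the rhythmic source only supplies onset positions.

```python
import random
from collections import defaultdict


def build_transitions(sequence):
    """Count first-order transitions between consecutive symbols."""
    counts = defaultdict(lambda: defaultdict(int))
    for current, nxt in zip(sequence, sequence[1:]):
        counts[current][nxt] += 1
    return counts


def generate(counts, start, length, rng=None):
    """Walk the chain, sampling each next state in proportion to its count."""
    rng = rng or random.Random()
    out = [start]
    for _ in range(length - 1):
        options = counts.get(out[-1])
        if not options:
            # Dead end (state never seen with a successor): restart.
            out.append(start)
            continue
        states = list(options)
        weights = [options[s] for s in states]
        out.append(rng.choices(states, weights=weights, k=1)[0])
    return out


# Hypothetical training material (MIDI note numbers) and onset grid steps.
training_notes = [60, 62, 64, 62, 60, 67, 64, 62, 60]
onset_steps = [0, 3, 4, 8, 10, 12, 14, 15]

table = build_transitions(training_notes)
melody = generate(table, start=60, length=len(onset_steps), rng=random.Random(0))

# Pair every onset from the rhythmic source with a generated pitch.
accompaniment = list(zip(onset_steps, melody))
```

Because the rhythm and the pitch choice are decoupled here, any MIDI rhythmic source can supply the onsets while the chain only decides which note to play next.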

Developed by Nicholas Evans and me.

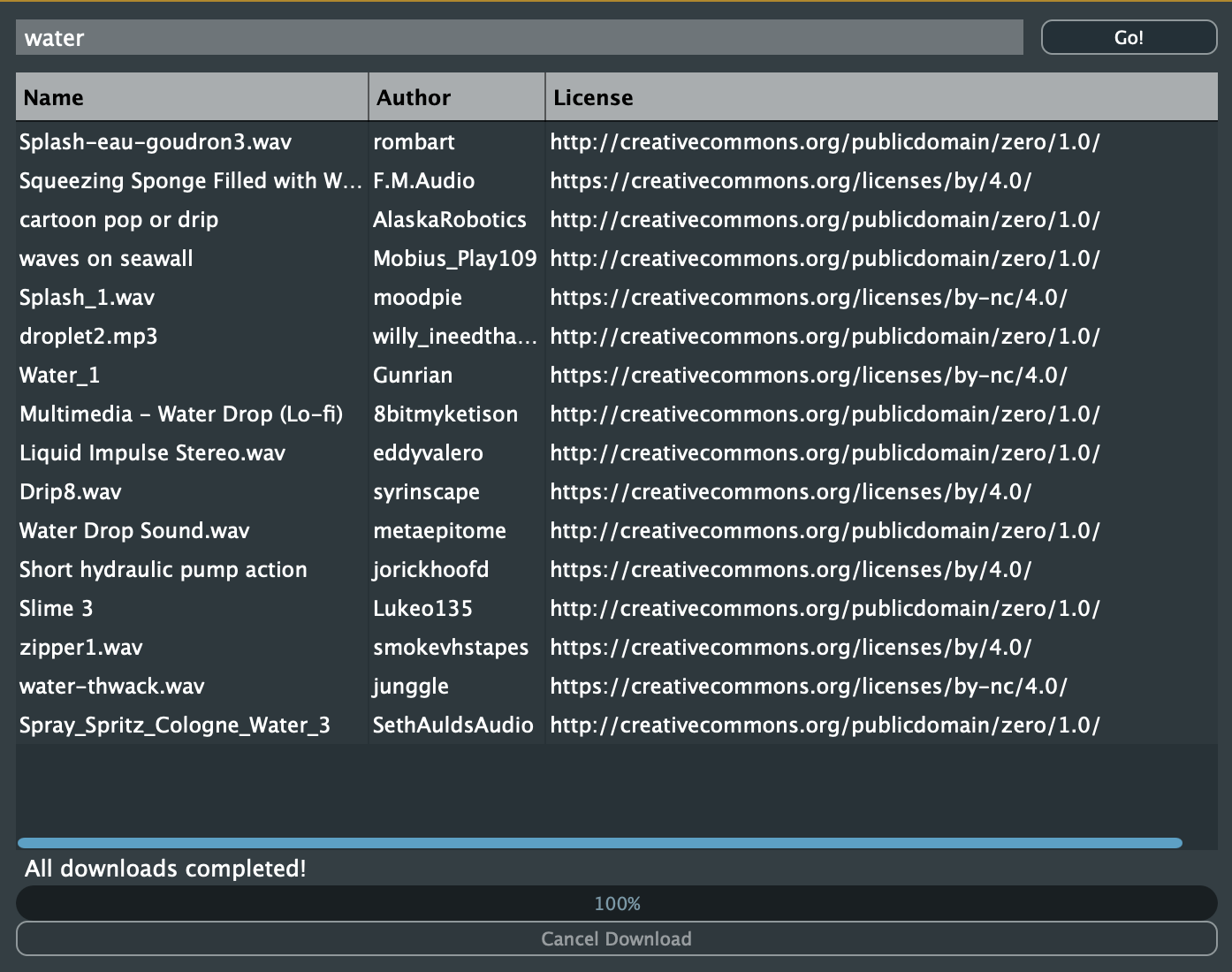

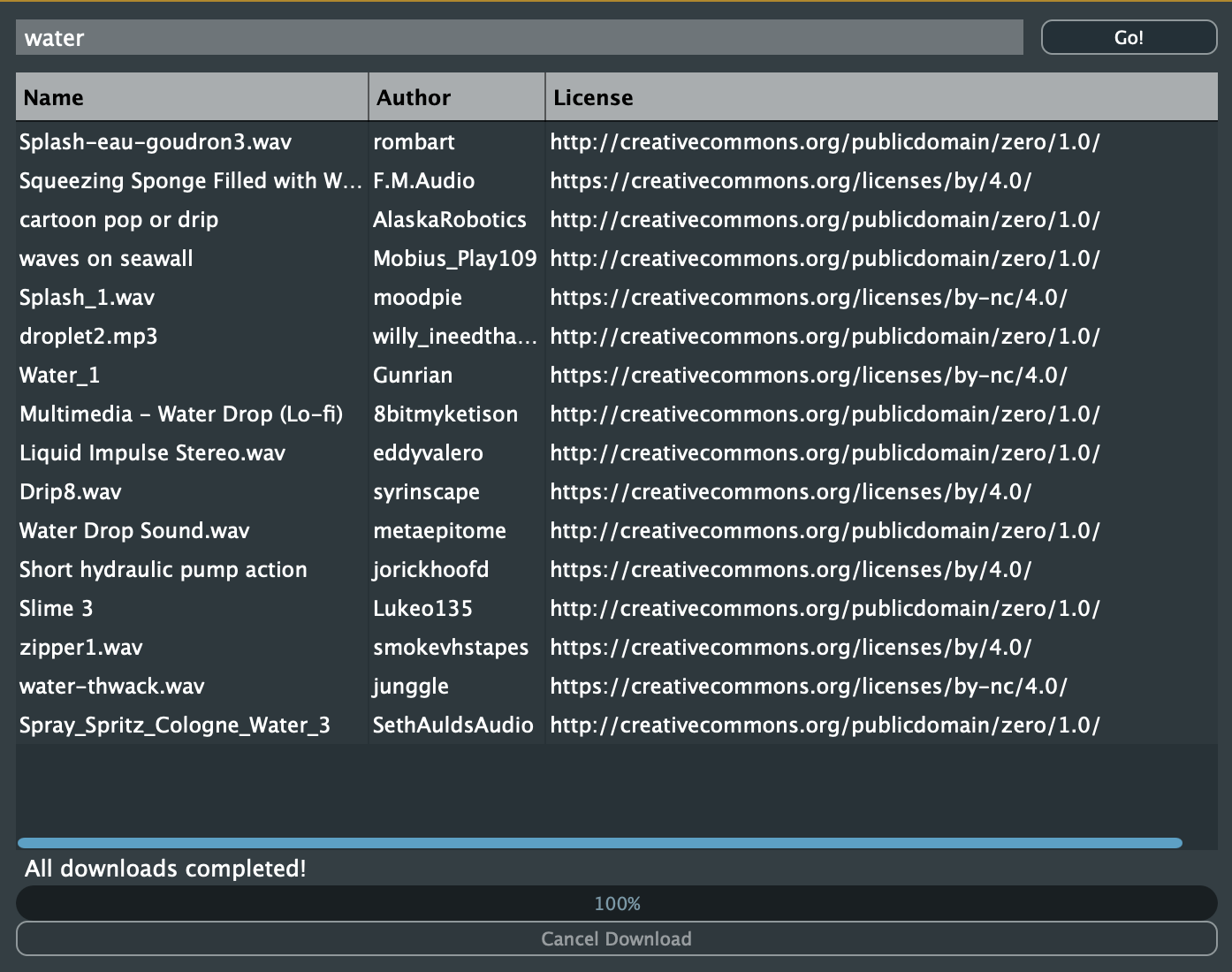

Freesound Rack Plugin

Access the Freesound database directly from your DAW

After installation, the plugin will appear in your DAW under MusicTechnologyGroup > FreesoundRack.

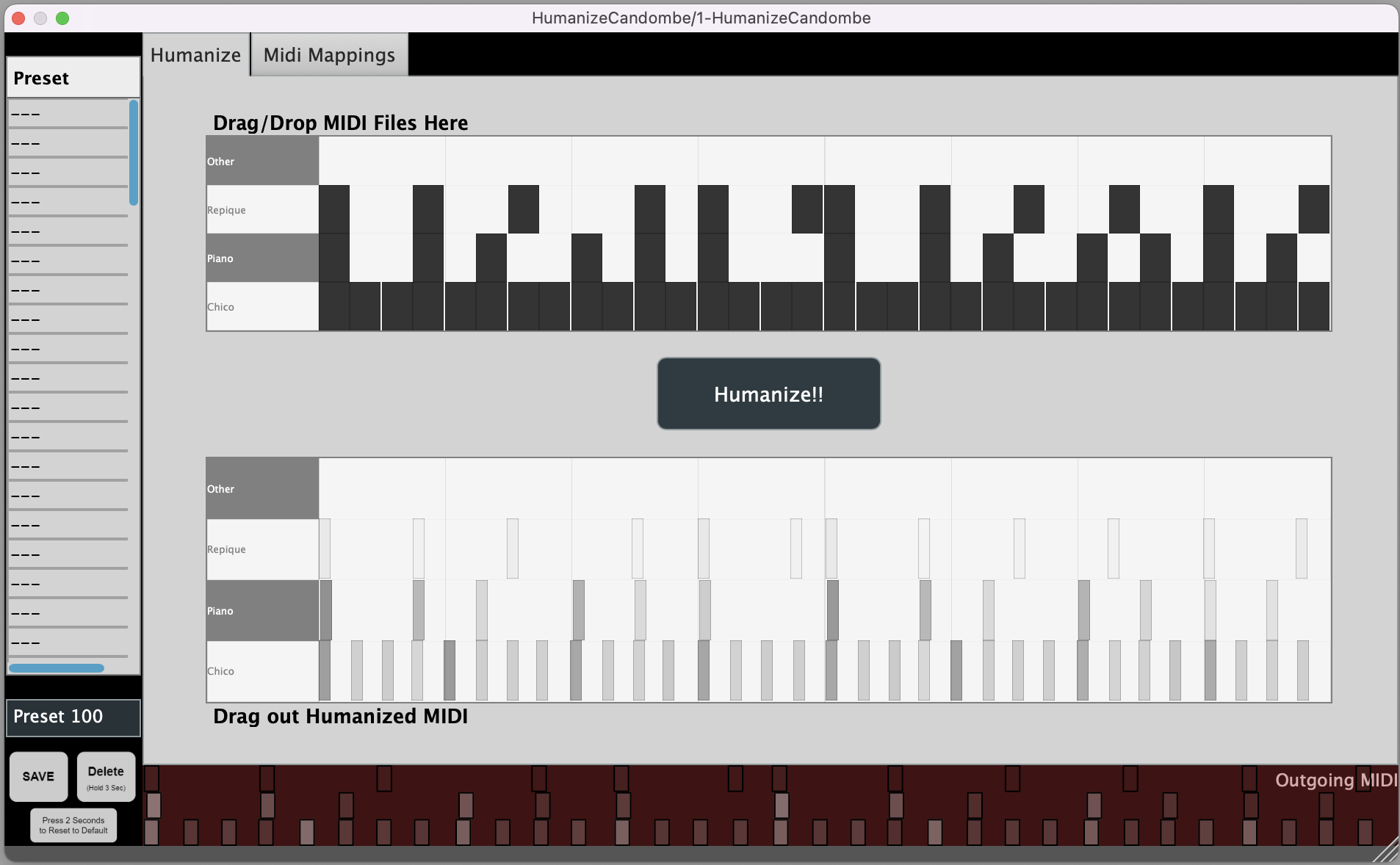

HumanizeCandombe VST

Adds velocity and micro-timing to quantized MIDI sequences

After installation, the plugin will appear in your DAW under MusicTechnologyGroup > HumanizeCandombe.

GrooveTransformer VST

A performance-oriented generative rhythm sequencer

After installation, the plugin will appear in your DAW under MusicTechnologyGroup > GrooveTransformer.

Developed by Nicholas Evans and me.

Freesound Simple Sampler

An updated version of Ramires and Font's Freesound Simple Sampler, adapted for V2 of the Freesound API

The plugin source code is in the ./Plugins/FreesoundSimpleSampler directory of the repository.

After installation, the plugin will appear in your DAW under MusicTechnologyGroup > FreesoundSimpleSampler.

This plugin uses a JUCE wrapper for Freesound API V2, adapted from the original V1 implementation by António Ramires (see V1 source link above) to work with the latest Freesound API.

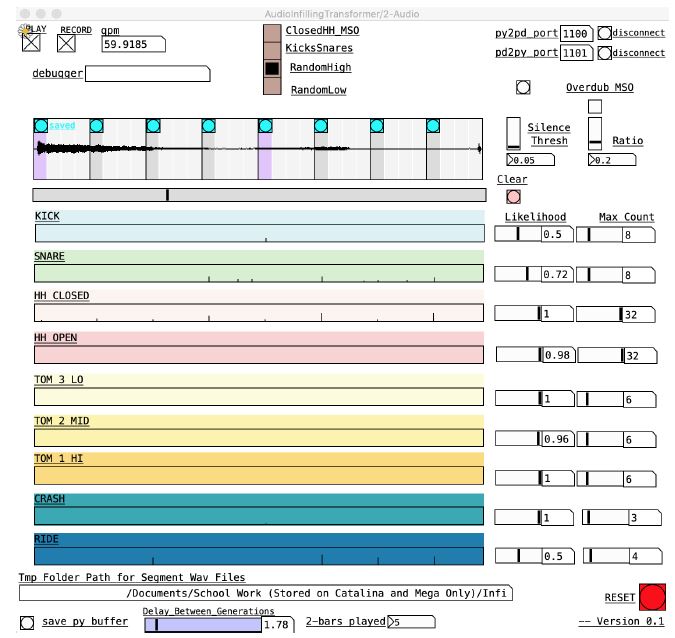

Groove2Drum Plugin

A VST3 plugin for generating MIDI drum accompaniments in real-time

Packaged releases coming soon — check the repository for updates.

GrooveTransformer Eurorack

A Generative Control Voltage Sequencer

A software version of the module is available via the link above.

Developed by Nicholas Evans and me.

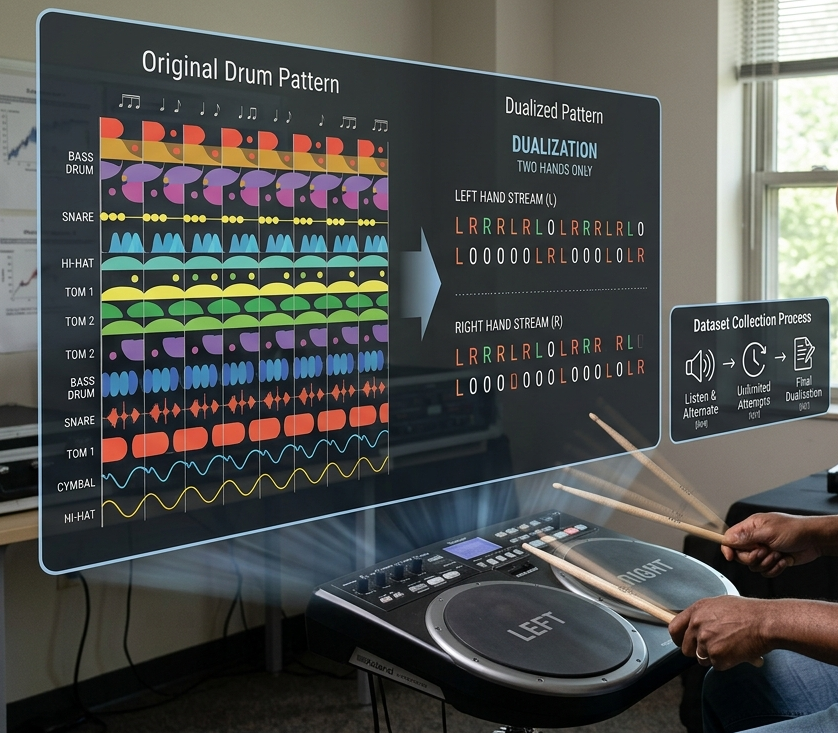

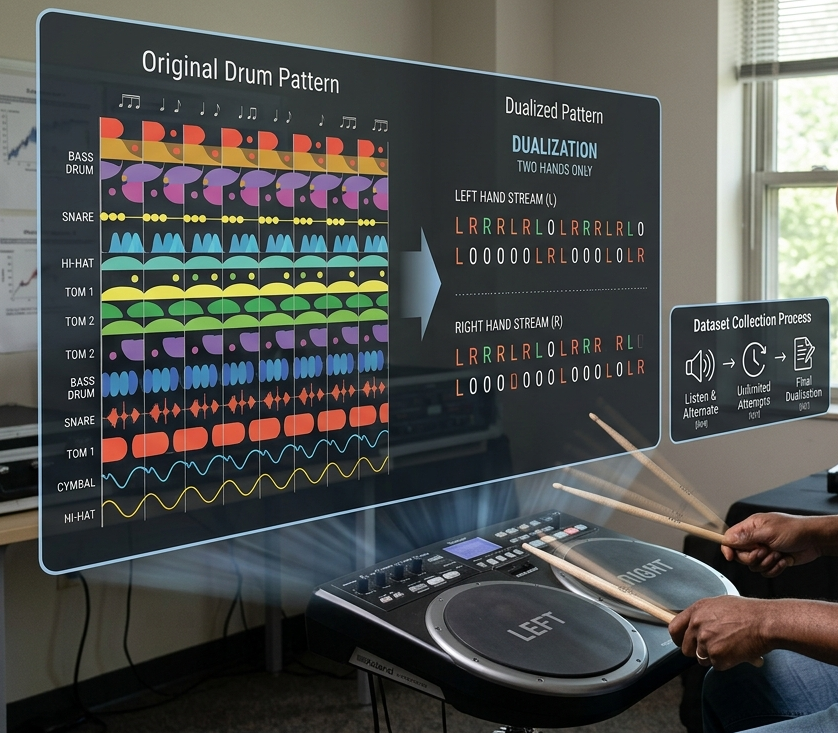

TapTamDrum

A dataset of drummer-performed two-hand reductions of complex drum patterns

The dataset is freely available and fully documented at taptamdrum.github.io.

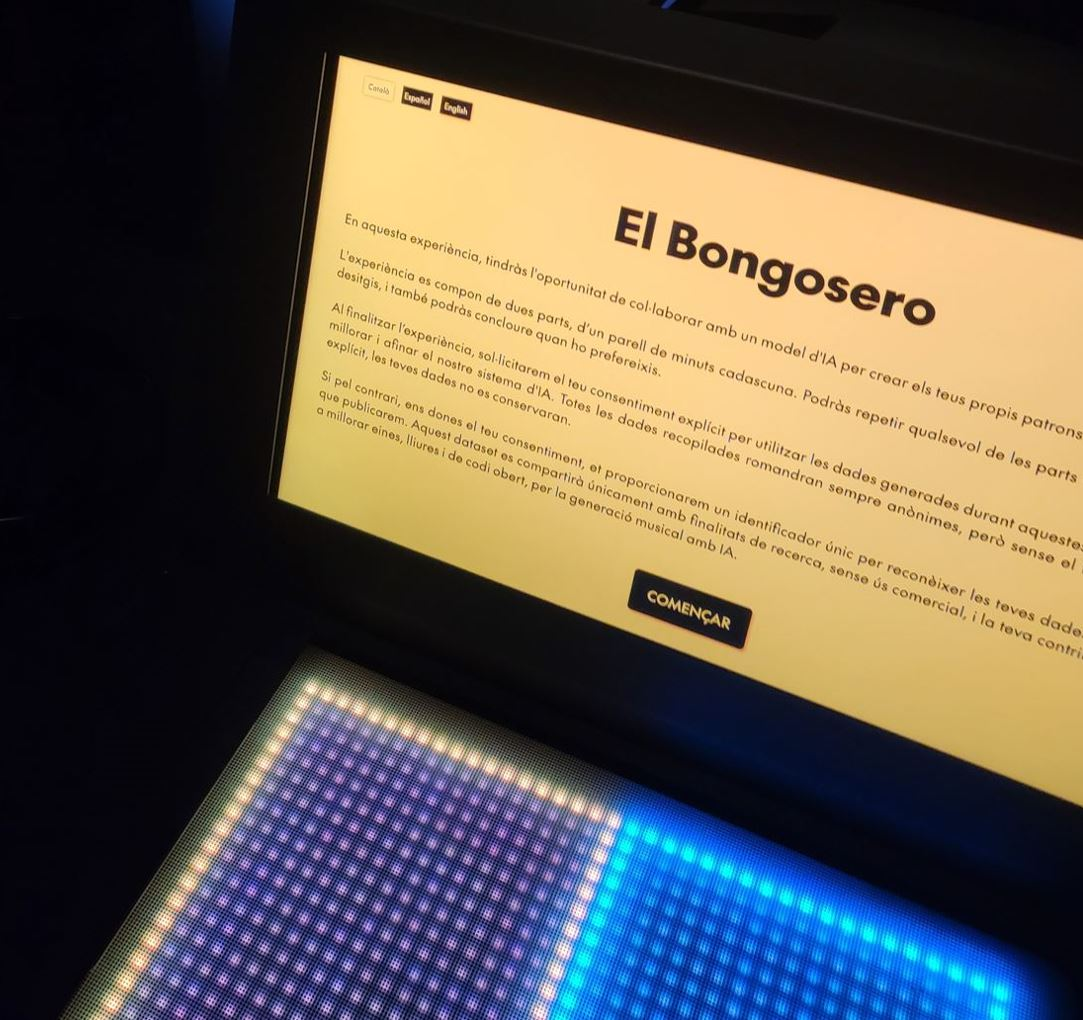

El Bongosero

A Dataset of Hand-Played Bongo Grooves Accompanying Drum Patterns

Developed by Nicholas Evans and me.

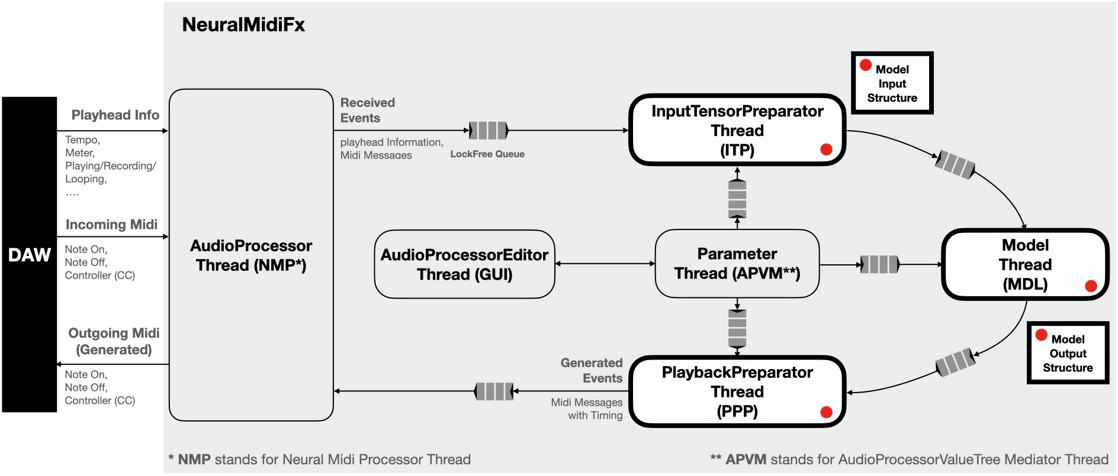

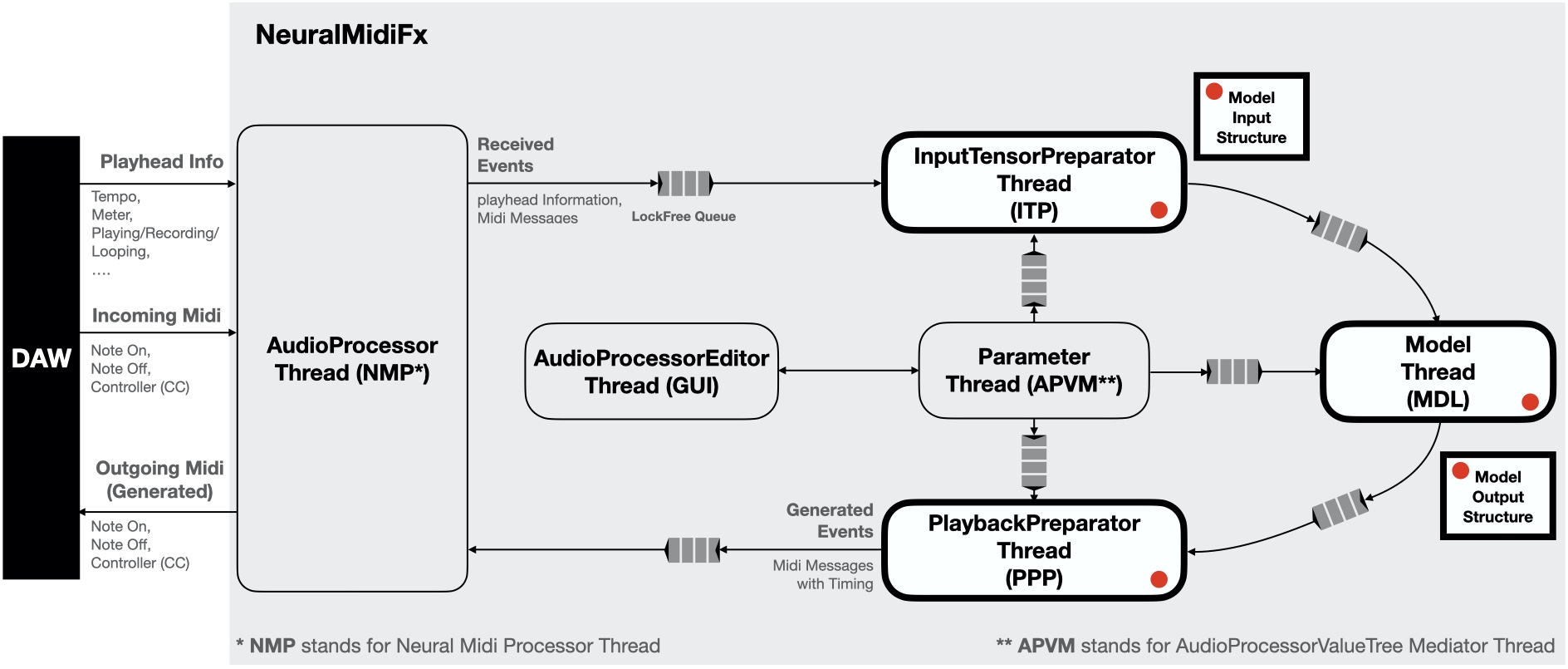

NeuralMidiFx

A Wrapper Template for Deploying Neural Networks as VST3 Plugins

The wrapper is open-source and freely available on GitHub.

FRFT in the Browser

WebAssembly ports of the Fractional Fourier Transform with a collection of ready-to-embed interactive browser widgets.

The source code can be found in the /web directory of the repository linked above.

These widgets were developed by Behzad Haki and Esteban Gutiérrez.

Synthesis Using Fractional Fourier Transform (FRFT)

A tutorial on the 'Fractional Fourier Transform Sound Synthesis' paper by Gutiérrez et al.

This tutorial was prepared by Esteban Gutiérrez and me. The content is based on the work done by Esteban and his colleagues in the paper “Fractional Fourier Transform Sound Synthesis”, published in 2025.

Freesound API V2

Developing audio applications and plugins with JUCE and Freesound API V2

The tutorial code is in the Plugins/FreesoundSimpleSampler/Source directory of the repository.

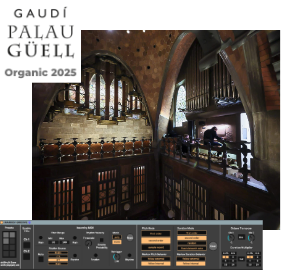

Palau Güell Live Show — Nov 2024

A generative organ performance at Palau Güell in Barcelona

CCCB Live Show — Feb 2024

A public showcase of the GrooveTransformer system at the Centre de Cultura Contemporània de Barcelona.

CCCB: Artificial Intelligence Exhibition

A six-month interactive installation at the Centre de Cultura Contemporània de Barcelona in which over 3,000 members of the public engaged with a generative sonic system.

Sónar+D Music Festival 2023

Showcased the GrooveTransformer Eurorack module to the general public at the Sónar+D festival in Barcelona.

+RAIN Film Festival 2023

A live performance involving the GrooveTransformer Eurorack module at the +RAIN Film Festival at UPF, Barcelona.

I’ve been working on a new fun side project called FreesoundRack. It’s a sampler plugin that allows you to easily load and play sounds from Freesound.org. The idea is to be able to quickly curate racks of sounds from Freesound and use them in your music production workflow.

The plugin is still in early development, but for those interested, an early version is available here:

We just presented the following paper at AIMC 2025.

Exploring Situated Stabilities of a Rhythm Generation System Through Variational Cross-Examination

Błażej Kotowski*, Nicholas Evans*, Behzad Haki, Frederic Font, and Sergi Jordà

* Equal contribution

Proceedings of the 6th Conference on AI Music Creativity (AIMC) · 2025

Abstract

This paper investigates GrooveTransformer, a real-time rhythm generation system, through the postphenomenological framework of Variational Cross-Examination (VCE). By reflecting on its deployment across three distinct artistic contexts, we identify three stabilities: an autonomous drum accompaniment generator, a rhythmic control voltage sequencer in Eurorack format, and a rhythm driver for a harmonic accompaniment system. The versatility of its applications was not an explicit goal from the outset of the project. Thus, we ask: how did this multistability emerge? Through VCE, we identify three key contributors to its emergence: the affordances of system invariants, the interdisciplinary collaboration, and the situated nature of its development. We conclude by reflecting on the viability of VCE as a descriptive and analytical method for Digital Musical Instrument (DMI) design, emphasizing its value in uncovering how technologies mediate, co-shape, and are co-shaped by users and contexts.

BibTeX

@inproceedings{kotowski_2025_16946740, author = {Kotowski, Błażej and Evans, Nicholas and Haki, Behzad and Font, Frederic and Jordà, Sergi}, title = {{Exploring Situated Stabilities of a Rhythm Generation System Through Variational Cross-Examination}}, month = sep, year = {2025}, doi = {10.5281/zenodo.16946740}, url = {https://doi.org/10.5281/zenodo.16946740}, address = {Brussels, Belgium}, booktitle = {{Proceedings of the 6th Conference on AI Music Creativity (AIMC)}}, }

The following paper was just presented at ICMC 2025:

Learning Microrhythm in Uruguayan Candombe using Transformers

Anmol Mishra, Satyajeet Prabhu, Behzad Haki, and Martín Rocamora

Proceedings of the International Computer Music Conference (ICMC) · 2025

Abstract

Musicians rely on nuanced microrhythm, slight variations in timing, dynamics, and other aspects, to create an expressive rhythmic feel in music performance. Electronic music production often attempts to replicate these qualities through algorithmic manipulations to achieve similar effects. In this work, we address the generation of microrhythm using a method that learns microtiming and dynamics from onset timing and strength annotations of drum performances. We frame microrhythm learning as a sequence modeling task, leveraging a Transformer-based model. Our focus is on Uruguayan candombe drumming, where we explore its rhythmic patterns at both the beat and rhythmic cycle levels. To evaluate the model’s effectiveness in replicating the original microrhythm, we compare the mean, standard deviation, and histogram intersection of timing deviations and dynamics values at each subdivision for the original and the generated data. The model is deployed as a VST enabling artists to incorporate candombe grooves into drum scores. With this work, we aim to bridge the gap between algorithmic rhythm creation and the expressive qualities of live performance, striving to produce music with the authentic grooves of various Latin American genres.

BibTeX

@inproceedings{mishra2025learning, title = {{Learning Microrhythm in Uruguayan Candombe using Transformers}}, author = {Mishra, Anmol and Prabhu, Satyajeet and Haki, Behzad and Rocamora, Mart{\'\i}n}, year = {2025}, month = jun, booktitle = {{Proceedings of the International Computer Music Conference (ICMC)}}, address = {Boston, Massachusetts}, }
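The evaluation described in the abstract above compares the mean, standard deviation, and histogram intersection of timing deviations between original and generated data. A minimal sketch of such a comparison for a single subdivision follows. The bin count, the value range (deviations expressed as a fraction of a grid step), and the function names are assumptions for illustration, not details taken from the paper.

```python
import numpy as np


def histogram_intersection(a, b, bins=20, value_range=(-0.5, 0.5)):
    """Overlap of two normalized histograms: 1.0 = identical, 0.0 = disjoint."""
    ha, _ = np.histogram(a, bins=bins, range=value_range)
    hb, _ = np.histogram(b, bins=bins, range=value_range)
    if ha.sum() == 0 or hb.sum() == 0:
        return 0.0  # nothing to compare if either histogram is empty
    ha = ha / ha.sum()
    hb = hb / hb.sum()
    return float(np.minimum(ha, hb).sum())


def compare_subdivision(original, generated):
    """Summary statistics for one subdivision's timing deviations."""
    return {
        "mean_original": float(np.mean(original)),
        "mean_generated": float(np.mean(generated)),
        "std_original": float(np.std(original)),
        "std_generated": float(np.std(generated)),
        "intersection": histogram_intersection(original, generated),
    }


# Hypothetical timing deviations (fraction of a grid step) at one subdivision.
original = [0.02, 0.05, 0.04, 0.06, 0.03]
generated = [0.03, 0.05, 0.07, 0.04, 0.02]
report = compare_subdivision(original, generated)
```

The same function can be applied to dynamics values by passing a range that matches their scale, for example `value_range=(0.0, 1.0)` for normalized velocities.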

The following paper was just presented at NIME 2025:

Repurposing a Rhythm Accompaniment System for Pipe Organ Performance

Nicholas Evans*, Behzad Haki*, Sergi Jordà

* Equal contribution

Proceedings of the International Conference on New Interfaces for Musical Expression (NIME) · 2025

Abstract

This paper presents an overview of a human-machine collaborative musical performance by Raül Refree utilizing multiple MIDI-enabled pipe organs at Palau Güell, as part of the Organic concert series. Our earlier collaboration focused on live performances using drum generation systems, where generative models captured rhythmic transient structures while ignoring harmonic information. For the organ performance, we required a system capable of generating harmonic sequences in real-time, conditioned on Refree’s performance. Instead of developing a comprehensive state-of-the-art model, we integrated a more traditional generative method to convert our pitch-agnostic rhythmic patterns into harmonic sequences. This paper details the development process, the creative and technical considerations behind the final performance, and a reflection on the efficacy and adaptability of the chosen methodology.

BibTeX

@inproceedings{nime2025_16, address = {Canberra, Australia }, articleno = {16}, author = {Evans, Nicholas and Haki, Behzad and Jordà, Sergi}, booktitle = {Proceedings of the International Conference on New Interfaces for Musical Expression (NIME)}, doi = {10.5281/zenodo.15698807}, issn = {2220-4806}, month = jun, numpages = {5}, pages = {116--120}, title = {{Repurposing a Rhythm Accompaniment System for Pipe Organ Performance}}, track = {Paper}, year = {2025}, }

I have just successfully defended my PhD thesis, titled “Design, Development, and Deployment of Real-Time Drum Accompaniment Systems”.

You can find more info about my thesis here:

Design, Development, and Deployment of Real-Time Drum Accompaniment Systems

Behzad Haki

PhD Thesis · Universitat Pompeu Fabra · 2024

Abstract

This dissertation examines the generation of real-time symbolic drum accompaniments, with a particular focus on live improvisation contexts. While the research does occasionally focus on the audio domain, the majority of the research is centered on symbolic-to-symbolic systems. This dissertation addresses real-time drum accompaniment from multiple perspectives: (1) conceptual, where a target application is designed based on a set of specified requirements, (2) architectural, where specific generative models are designed and developed for the selected conceptual design, and (3) deployment, where the conceptual design is realized and evaluated.

Throughout this work, three accompaniment systems were developed and refined. The first work, detailed in Chapters 3 and 4, aimed to develop a lightweight system on which future more sophisticated designs could be based. This system was based on a transformer model that was developed to convert a monotonic (single voice) rhythmic loop (groove) into a full multi-voice drum loop. The concept explored here was to investigate whether a loop-based system could be effectively used for generating drum accompaniments in long evolving improvisational sessions. The resulting system was evaluated by professional musician Raül Refree, who provided valuable insights on how the design could be modified to better suit the task. Following these evaluations, the second system, GrooveTransformer, was developed (discussed in Chapter 5). In this work, rather than relying on our personal speculations, we collaborated with Refree from the outset of the project. As such, we were able to develop a system that was far more suitable for the task at hand, to the extent that the musician felt comfortable to perform with the system in a public live improvisational session. While still loop-based, the generative model in this work was based on a variational transformer that enabled us to address the majority of the collaborating musician’s requirements for the system. Although initially deployed as software, we also developed a hardware Eurorack version (discussed in Chapter 6). The Eurorack module was designed to encourage experimentation and exploration beyond the system’s original intent.

In the third system (discussed in Chapter 7), we moved beyond the loop-based approach. The primary goal was to enhance the system’s awareness of the evolving performance over extended durations. To this end, we developed a new generative model with a much larger context. The larger model’s computational demands required a thorough exploration of both conceptual and technical deployment strategies.

All of these systems focused on converting a monotonic groove into a multi-voice drum pattern. In Chapter 8, we first discuss the limitations and affordances of basing the generations solely on groove. Additionally, several works and proposals surrounding this groove-to-drum approach are discussed in detail: (1) how to improve the process of extracting grooves from polyphonic sources, (2) how to make this approach more accommodating for individuals with varying levels of musical experience, (3) how to expand the concept to generate general rhythms rather than exclusively drums, and (4) how to extract groove from audio sources.

Beyond the primary objectives, this research also yielded several significant secondary contributions that arose from the explorations conducted. One such achievement was that we were able to establish that our systems can also be adapted to work with audio without major architectural changes (Appendix A). Moreover, we created NeuralMidiFx (Appendix B), a wrapper designed to facilitate the deployment of neural networks in VST (Virtual Studio Technology) format. This tool was developed to overcome the technical challenges encountered during the real-time deployment of the generative models. Furthermore, two novel datasets, TapTamDrum (Appendix C) and El Bongosero (Appendix D), were created as part of this research. These datasets serve as valuable resources for future studies on both rhythm generation and rhythm analysis.

BibTeX

@phdthesis{HakiPhDThesis, title = {{Design, Development, and Deployment of Real-Time Drum Accompaniment Systems}}, author = {Haki, Behzad}, year = {2024}, month = dec, address = {Barcelona, Spain}, school = {Universitat Pompeu Fabra}, }

We just had a live show at Palau Güell in Barcelona, Spain. Here is a blog post on the MTG website about this performance.

To learn more about this project, please check out the following publication:

Repurposing a Rhythm Accompaniment System for Pipe Organ Performance

Nicholas Evans*, Behzad Haki*, Sergi Jordà

* Equal contribution

Proceedings of the International Conference on New Interfaces for Musical Expression (NIME) · 2025

We will be presenting the El Bongosero dataset at ISMIR 2024.

El Bongosero: A Crowd-sourced Symbolic Dataset of Improvised Hand Percussion Rhythms Paired with Drum Patterns

Nicholas Evans*, Behzad Haki*, Daniel Gomez, and Sergi Jordà

* Equal contribution

Proceedings of the 25th International Society for Music Information Retrieval Conference · 2024

Abstract

We present El Bongosero, a large-scale, open-source symbolic dataset comprising expressive, improvised drum performances crowd-sourced from a pool of individuals with varying levels of musical expertise. Originating from an interactive installation hosted at Centre de Cultura Contemporània de Barcelona, our dataset consists of 6,035 unique tapped sequences performed by 3,184 participants. To our knowledge, this is the only symbolic dataset of its size and type that includes expressive timing and dynamics information as well as each participant’s level of expertise. These unique characteristics could prove to be valuable to future research, particularly in the areas of music generation and music education. Preliminary analyses, including a step-wise Jaccard similarity analysis on a subset of the data, demonstrate that this dataset is a diverse, nonrandom, and musically meaningful collection. To facilitate prompt exploration and understanding of the data, we have also prepared a dedicated website and an open-source API in order to interact with the data.

BibTeX

@inproceedings{Haki2024ELBNG, title = {{El Bongosero: A Crowd-sourced Symbolic Dataset of Improvised Hand Percussion Rhythms Paired with Drum Patterns}}, author = {Evans, Nicholas and Haki, Behzad and Gomez, Daniel and Jorda, Sergi}, booktitle = {{Proceedings of the 25th International Society for Music Information Retrieval Conference}}, year = {2024}, month = nov, publisher = {ISMIR}, }
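The abstract above mentions a step-wise Jaccard similarity analysis. The exact procedure is not described on this page, so the sketch below shows only one plausible reading: each tapped sequence is reduced to the set of grid steps that contain an onset, and two sequences are compared by the ratio of shared steps to all occupied steps. The 16-step grid, the example patterns, and the function name are assumptions.

```python
def jaccard_similarity(steps_a, steps_b):
    """Jaccard index of two collections of onset steps: |A ∩ B| / |A ∪ B|."""
    a, b = set(steps_a), set(steps_b)
    if not a and not b:
        return 1.0  # two empty patterns are treated as identical
    return len(a & b) / len(a | b)


# Hypothetical patterns on a 16-step grid (values are step indices with onsets).
pattern_a = [0, 4, 8, 12]
pattern_b = [0, 4, 10, 12]

similarity = jaccard_similarity(pattern_a, pattern_b)  # 3 shared / 5 occupied
```

A value near 1.0 means two participants tapped on almost the same steps, while values near 0.0 indicate little overlap in onset placement.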

Moreover, we will be presenting the following paper at ISMIR 2024 Late-Breaking Demo Session.

Groove Transfer VST for Latin American Rhythms

Anmol Mishra, Behzad Haki, Satyajeet Prabhu, and Martín Rocamora

Presented at the 25th International Society for Music Information Retrieval Conference (ISMIR) · 2024

Abstract

Latin American music relies on groove—small variations in timing, dynamics, and other aspects—to create an expressive rhythmic feel in music performance. Electronic music production often attempts to replicate these qualities through algorithmic manipulations to achieve similar effects. In this work, we employ a transformer-based model to learn microtiming and dynamics from onset timing and strength annotations of Uruguayan Candombe drum performances. The model is then deployed as a VST allowing users to apply the learnt candombe microrhythms to quantized MIDI drum performances. With this work, we aim to bridge the gap between algorithmic rhythm creation and the expressive qualities of live performance, striving to produce music with the authentic grooves of various Latin American genres.

BibTeX

@inproceedings{Mishra2024LBD, author = {Mishra, Anmol and Haki, Behzad and Prabhu, Satyajeet and Rocamora, Martín}, booktitle = {{Presented at 25th International Society for Music Information Retrieval Conference (ISMIR)}}, year = {2024}, month = nov, publisher = {ISMIR}, title = {{Groove Transfer VST for Latin American Rhythms}}, }

TapTamDrum Dataset Presentation at ISMIR 2023

I will be presenting the TapTamDrum dataset at ISMIR 2023.

To read more about the dataset, please visit the TapTamDrum website or the paper here.

TapTamDrum: A Dataset for Dualized Drum Patterns

Behzad Haki, Błażej Kotowski, Cheuk Lee, and Sergi Jordà

Proceedings of the 24th International Society for Music Information Retrieval Conference · 2023

Abstract

Drummers spend extensive time practicing rudiments to develop technique, speed, coordination, and phrasing. These rudiments are often practiced on "silent" practice pads using only the hands. Additionally, many percussive instruments across cultures are played exclusively with the hands. Building on these concepts and inspired by Einstein’s probably apocryphal quote, "Make everything as simple as possible, but not simpler," we hypothesize that a dual-voice reduction could serve as a natural and meaningful compressed representation of multi-voiced drum patterns. This representation would retain more information than its corresponding monotonic representation while maintaining relative simplicity for tasks such as rhythm analysis and generation. To validate this potential representation, we investigate whether experienced drummers can consistently represent and reproduce the rhythmic essence of a given drum pattern using only their two hands. We present TapTamDrum: a novel dataset of repeated dualizations from four experienced drummers, along with preliminary analysis and tools for further exploration of the data.

BibTeX

@inproceedings{Haki2023TapTamDrum, title = {{TapTamDrum: A Dataset for Dualized Drum Patterns}}, author = {Haki, Behzad and Kotowski, Błażej and Lee, Cheuk and Jorda, Sergi}, booktitle = {{Proceedings of the 24th International Society for Music Information Retrieval Conference}}, year = {2023}, month = nov, publisher = {ISMIR}, }

My colleague Nicholas Evans, our supervisor Sergi Jordà, and I have prepared an interactive installation for the CCCB’s exhibition “AI: Artificial Intelligence” in Barcelona.

The installation is called “El Bongosero”. If you are in Barcelona, please visit the exhibition and check it out!

The exhibition will be open from October 18, 2023, to March 17, 2024.

For more information about the exhibition, please visit the CCCB’s website.

To learn more about the installation, please check out the following publication:

El Bongosero: A Crowd-sourced Symbolic Dataset of Improvised Hand Percussion Rhythms Paired with Drum Patterns

Nicholas Evans*, Behzad Haki*, Daniel Gomez, and Sergi Jordà

* Equal contribution

Proceedings of the 25th International Society for Music Information Retrieval Conference · 2024

I will be running a workshop on the following paper at AIMC 2023. If you are interested in attending, please register here.

NeuralMidiFx: A Wrapper Template for Deploying Neural Networks as VST3 Plugins

Behzad Haki, Julian Lenz, and Sergi Jordà

Proceedings of the 4th International Conference on AI and Musical Creativity · 2023

Abstract

Proper research, development and evaluation of AI-based generative systems of music that focus on performance or composition require active user-system interactions. To include a diverse group of users that can properly engage with a given system, researchers should provide easy access to their developed systems. Given that many users (i.e. musicians) are non-technical to the field of AI and the development frameworks involved, the researchers should aim to make their systems accessible within the environments commonly used in production/composition workflows (e.g. in the form of plugins hosted in digital audio workstations). Unfortunately, deploying generative systems in this manner is highly expensive. As such, researchers with limited resources are often unable to provide easy access to their works, and subsequently, are not able to properly evaluate and encourage active engagement with their systems. Facing these limitations, we have been working on a solution that allows for easy, effective and accessible deployment of generative systems. To this end, we propose a wrapper/template called NeuralMidiFx, which streamlines the deployment of neural network based symbolic music generation systems as VST3 plugins. The proposed wrapper is intended to allow researchers to develop plugins with ease while requiring minimal familiarity with plugin development.

BibTeX

@inproceedings{Haki2023NeuralMidiFx, author = {Haki, Behzad and Lenz, Julian and Jorda, Sergi}, booktitle = {{Proceedings of the 4th International Conference on AI and Musical Creativity}}, title = {{NeuralMidiFx: A Wrapper Template for Deploying Neural Networks as VST3 Plugins}}, year = {2023}, month = sep, }

My colleague, Nick Evans, will be performing with the GrooveTransformer Eurorack Module at the +RAIN Film Festival.

Moreover, we will have a booth at Sónar+D 2023 to showcase the GrooveTransformer Eurorack Module.

This is a module that Nick and I have been working on for the past year. It is a Eurorack module that uses the GrooveTransformer model to generate drum accompaniment in real-time.

You can find more information about this project through the following publication:

GrooveTransformer: A Generative Drum Sequencer Eurorack Module

Nicholas Evans*, Behzad Haki*, Sergi Jordà

* Equal contribution

Proceedings of the International Conference on New Interfaces for Musical Expression (NIME) · 2024

Abstract

This paper presents the GrooveTransformer, a Eurorack module designed for generative drum sequencing. Central to its design is a Variational Auto-Encoder (VAE), around which we have designed a deployment context enabling performance through accompaniment and/or user interaction. This module allows the user to use the system as an accompaniment generator while interacting with the generative processes in real-time. In this paper, we review the design principles and technical architecture of the module, while also discussing the potential and shortcomings of our work.

BibTeX

@inproceedings{Haki2024GrooveTransformer, author = {Evans, Nicholas and Haki, Behzad and Jorda, Sergi}, booktitle = {Proceedings of the International Conference on New Interfaces for Musical Expression (NIME)}, year = {2024}, month = sep, publisher = {NIME}, title = {{GrooveTransformer: A Generative Drum Sequencer Eurorack Module}}, }

We just presented the following paper at NIME 2023.

Completing Audio Drum Loops with Symbolic Drum Suggestions

Behzad Haki*, Teresa Pelinski*, Marina Nieto, and Sergi Jordà

* Equal contribution

Proceedings of the International Conference on New Interfaces for Musical Expression (NIME) · 2023

Abstract

Sampled drums can be used as an affordable way of creating human-like drum tracks, or perhaps more interestingly, can be used as a means of experimentation with rhythm and groove. Similarly, AI-based drum generation tools can focus on creating human-like drum patterns, or alternatively, focus on providing producers/musicians with means of experimentation with rhythm. In this work, we aimed to explore the latter approach. To this end, we present a suite of Transformer-based models aimed at completing audio drum loops with stylistically consistent symbolic drum events. Our proposed models rely on a reduced spectral representation of the drum loop, striking a balance between a raw audio recording and an exact symbolic transcription. Using a number of objective evaluations, we explore the validity of our approach and identify several challenges that need to be further studied in future iterations of this work. Lastly, we provide a real-time VST plugin that allows musicians/producers to utilize the models in real-time production settings.

BibTeX

@inproceedings{Haki2023Completing, author = {Haki, Behzad and Pelinski, Teresa and Nieto, Marina and Jorda, Sergi}, booktitle = {Proceedings of the International Conference on New Interfaces for Musical Expression (NIME)}, year = {2023}, month = apr, publisher = {NIME}, title = {{Completing Audio Drum Loops with Symbolic Drum Suggestions}}, }

We just presented the following paper at AIMC 2022.

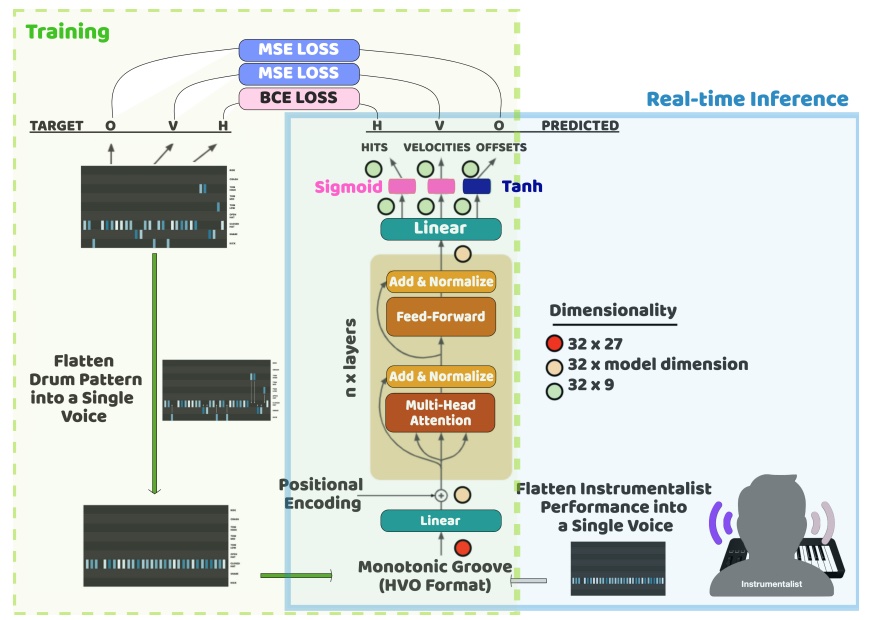

Real-Time Drum Accompaniment Using Transformer Architecture

Behzad Haki*, Marina Nieto*, Teresa Pelinski, and Sergi Jordà

* Equal contribution

Proceedings of the 3rd International Conference on AI and Musical Creativity · 2022

Abstract

This paper presents a real-time drum generation system capable of accompanying a human instrumentalist. The drum generation model is a transformer encoder trained to predict a short drum pattern given a reduced rhythmic representation. We demonstrate that with certain design considerations, the short drum pattern generator can be used as a real-time accompaniment in musical sessions lasting much longer than the duration of the training samples. A discussion on the potentials, limitations and possible future continuations of this work is provided.

BibTeX

@inproceedings{haki_behzad_2022_7088343, author = {Haki, Behzad and Nieto, Marina and Pelinski, Teresa and Jordà, Sergi}, title = {{Real-Time Drum Accompaniment Using Transformer Architecture}}, booktitle = {{Proceedings of the 3rd International Conference on AI and Musical Creativity}}, year = {2022}, publisher = {AIMC}, month = sep, doi = {10.5281/zenodo.7088343}, url = {https://doi.org/10.5281/zenodo.7088343}, }