Behzad Haki

PhD · Researcher & Developer · Music Technology · Barcelona

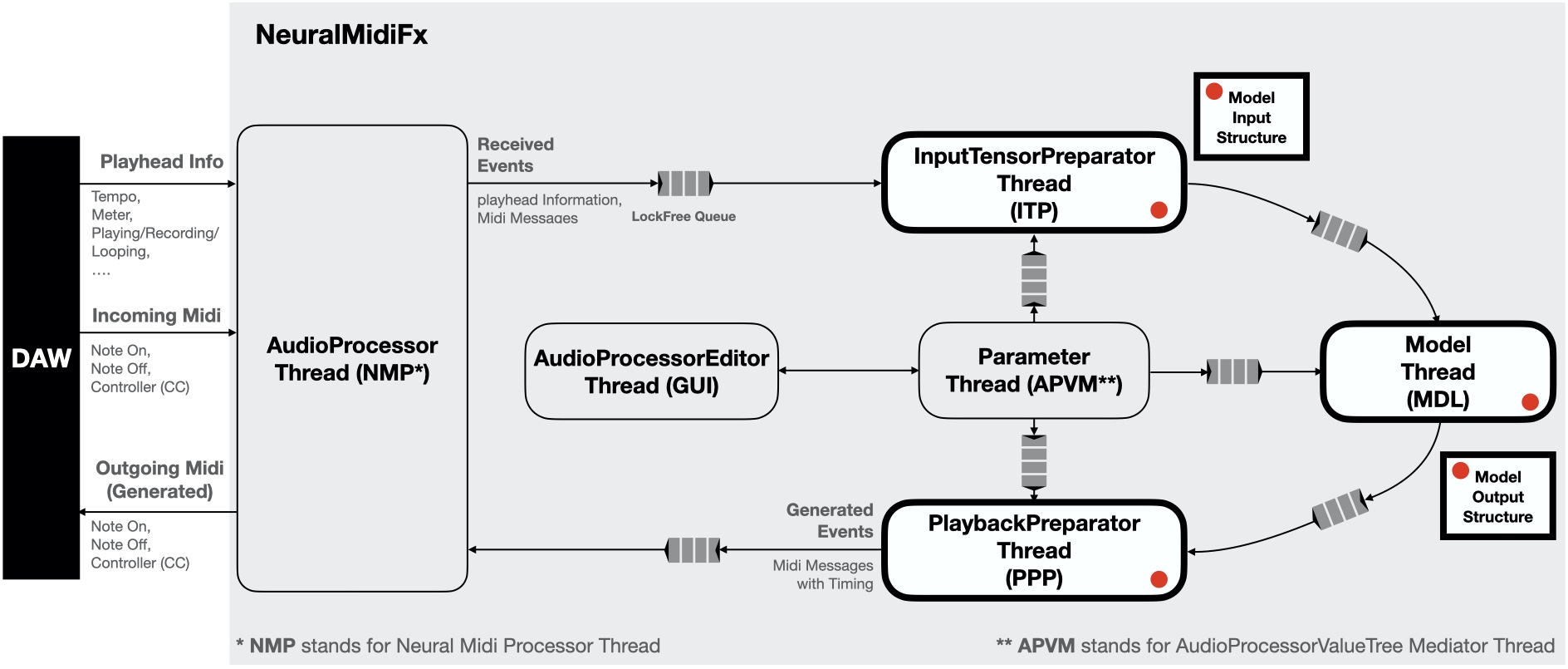

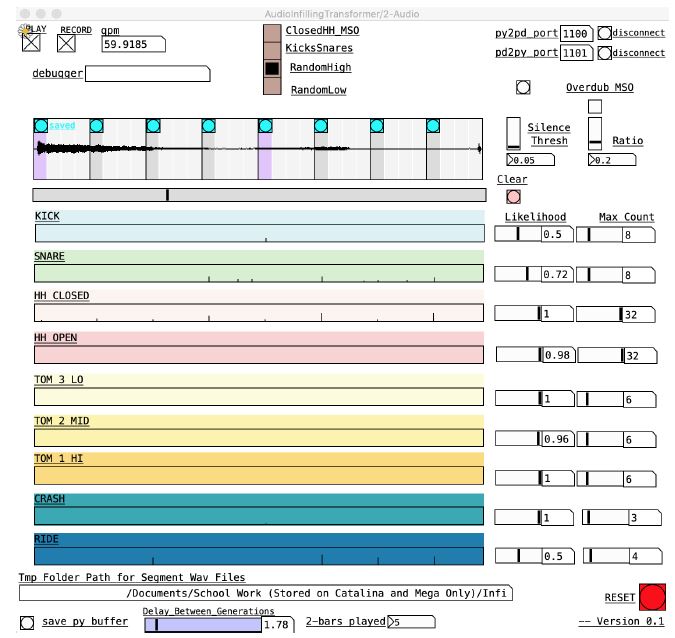

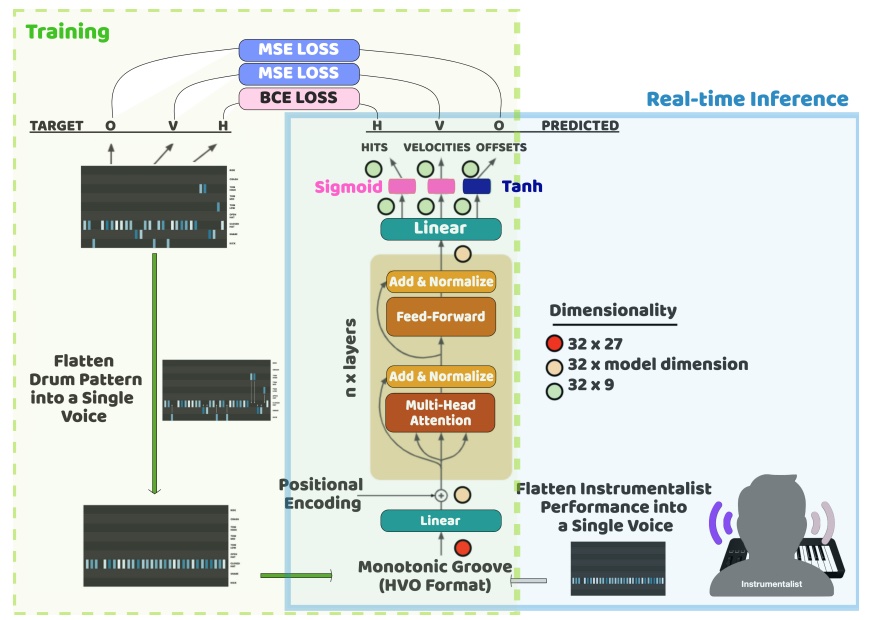

I recently completed my PhD at the Music Technology Group (MTG), Universitat Pompeu Fabra, where my research centered on interactive real-time generative systems for music. Specifically, I worked on designing and training lightweight neural networks for real-time music generation, and on deploying them as practical digital instruments — ranging from VST plugins to embedded hardware systems.

Alongside my research, I have hands-on development experience building audio software and tools, including open-source VST/AUv2 plugins, Max4Live devices, and hardware prototypes. My academic background spans signal processing, machine learning, music acoustics, and sound and music computing — through a BASc in Electrical Engineering (with a focus on DSP and acoustics) from the University of British Columbia and a Master's in Sound and Music Computing from UPF.

Beyond academia, I have also worked as an acoustic engineering consultant, applying my background in acoustics and signal processing to real-world projects.

📍 Barcelona, Spain

✉️ behzad.haki@upf.edu

I’ve been working on a fun new side project called FreesoundRack. It’s a sampler plugin that lets you easily load and play sounds from Freesound.org. The idea is to quickly curate racks of sounds from Freesound and use them in your music production workflow.

The plugin is still in early development, but for those interested, an early version is available here:

Over the past few years of research, I have developed many tools. Because of time constraints, they were available only as source code, which made them hard for some people to use. I am happy to announce that all of them are now easily accessible and ready to use: I have packaged each tool and prepared documentation and demos wherever possible.

To access them, refer to the Projects page, which links to all the tools I have developed.

We just presented the following paper at AIMC 2025.

Exploring Situated Stabilities of a Rhythm Generation System Through Variational Cross-Examination

2025

Abstract

This paper investigates GrooveTransformer, a real-time rhythm generation system, through the postphenomenological framework of Variational Cross-Examination (VCE). By reflecting on its deployment across three distinct artistic contexts, we identify three stabilities: an autonomous drum accompaniment generator, a rhythmic control voltage sequencer in Eurorack format, and a rhythm driver for a harmonic accompaniment system. The versatility of its applications was not an explicit goal from the outset of the project. Thus, we ask: how did this multistability emerge? Through VCE, we identify three key contributors to its emergence: the affordances of system invariants, the interdisciplinary collaboration, and the situated nature of its development. We conclude by reflecting on the viability of VCE as a descriptive and analytical method for Digital Musical Instrument (DMI) design, emphasizing its value in uncovering how technologies mediate, co-shape, and are co-shaped by users and contexts.

BibTeX

@misc{kotowski_2025_16946740,
  author = {Kotowski, Błażej and Evans, Nicholas and Haki, Behzad and Font, Frederic and Jordà, Sergi},
  title = {{Exploring Situated Stabilities of a Rhythm Generation System Through Variational Cross-Examination}},
  month = sep,
  year = {2025},
  doi = {10.5281/zenodo.16946740},
  url = {https://doi.org/10.5281/zenodo.16946740},
  address = {Brussels, Belgium},
}

The following paper was just presented at ICMC 2025:

Learning Microrhythm in Uruguayan Candombe using Transformers

Proceedings of the International Computer Music Conference (ICMC) · 2025

Abstract

Musicians rely on nuanced microrhythm, slight variations in timing, dynamics, and other aspects, to create an expressive rhythmic feel in music performance. Electronic music production often attempts to replicate these qualities through algorithmic manipulations to achieve similar effects. In this work, we address the generation of microrhythm using a method that learns microtiming and dynamics from onset timing and strength annotations of drum performances. We frame microrhythm learning as a sequence modeling task, leveraging a Transformer-based model. Our focus is on Uruguayan candombe drumming, where we explore its rhythmic patterns at both the beat and rhythmic cycle levels. To evaluate the model’s effectiveness in replicating the original microrhythm, we compare the mean, standard deviation, and histogram intersection of timing deviations and dynamics values at each subdivision for the original and the generated data. The model is deployed as a VST enabling artists to incorporate candombe grooves into drum scores. With this work, we aim to bridge the gap between algorithmic rhythm creation and the expressive qualities of live performance, striving to produce music with the authentic grooves of various Latin American genres.

BibTeX

@inproceedings{mishra2025learning,
  title = {{Learning Microrhythm in Uruguayan Candombe using Transformers}},
  author = {Mishra, Anmol and Prabhu, Satyajeet and Haki, Behzad and Rocamora, Mart{\'\i}n},
  year = {2025},
  month = jun,
  booktitle = {{Proceedings of the International Computer Music Conference (ICMC)}},
  address = {Boston, Massachusetts},
}

The following paper was just presented at NIME 2025:

Repurposing a Rhythm Accompaniment System for Pipe Organ Performance

Proceedings of the International Conference on New Interfaces for Musical Expression (NIME) · 2025

Abstract

This paper presents an overview of a human-machine collaborative musical performance by Raül Refree utilizing multiple MIDI-enabled pipe organs at Palau Güell, as part of the Organic concert series. Our earlier collaboration focused on live performances using drum generation systems, where generative models captured rhythmic transient structures while ignoring harmonic information. For the organ performance, we required a system capable of generating harmonic sequences in real-time, conditioned on Refree’s performance. Instead of developing a comprehensive state-of-the-art model, we integrated a more traditional generative method to convert our pitch-agnostic rhythmic patterns into harmonic sequences. This paper details the development process, the creative and technical considerations behind the final performance, and a reflection on the efficacy and adaptability of the chosen methodology.

BibTeX

@inproceedings{nime2025_16,
  address = {Canberra, Australia},
  articleno = {16},
  author = {Evans, Nicholas and Haki, Behzad and Jordà, Sergi},
  booktitle = {Proceedings of the International Conference on New Interfaces for Musical Expression (NIME)},
  doi = {10.5281/zenodo.15698807},
  issn = {2220-4806},
  month = jun,
  numpages = {5},
  pages = {116--120},
  title = {{Repurposing a Rhythm Accompaniment System for Pipe Organ Performance}},
  track = {Paper},
  year = {2025},
}