Access

If you would like to try out the module, a software version is available here

License

Copyright (c) Music Technology Group, 2023

Permission is hereby granted, free of charge, to any person obtaining a copy of this hardware design and associated documentation files (the “Design”), to deal in the Design without restriction, including without limitation the rights to use, copy, modify, merge, publish, and distribute copies of the Design, subject to the following conditions:

THE DESIGN MAY NOT BE USED FOR COMMERCIAL PURPOSES.

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Design.

Publication

GrooveTransformer: A Generative Drum Sequencer Eurorack Module

Proceedings of the International Conference on New Interfaces for Musical Expression (NIME) 2024 · 2024

Abstract

This paper presents the GrooveTransformer, a Eurorack module designed for generative drum sequencing. Central to its design is a Variational Auto-Encoder (VAE), around which we have designed a deployment context enabling performance through accompaniment and/or user interaction. This module allows the user to use the system as an accompaniment generator while interacting with the generative processes in real-time. In this paper, we review the design principles and technical architecture of the module, while also discussing the potentials and short-comings of our work.

BibTeX

@inproceedings{Haki2024GrooveTransformer,
  author = {Evans, Nicholas and Haki, Behzad and Jorda, Sergi},
  booktitle = {Proceedings of the International Conference on New Interfaces for Musical Expression (NIME) 2024},
  year = {2024},
  month = sep,
  publisher = {NIME},
  title = {{GrooveTransformer: A Generative Drum Sequencer Eurorack Module}},
}

Design, development, and deployment of real-time drum accompaniment systems

2024

Abstract

This dissertation examines the generation of real-time symbolic drum accompaniments, with a particular focus on live improvisation contexts. While the research does occasionally focus on the audio domain, the majority of the research is centered on symbolic-to-symbolic systems. This dissertation addresses real-time drum accompaniment from multiple perspectives: (1) conceptual, where a target application is designed based on a set of specified requirements, (2) architectural, where specific generative models are designed and developed for the selected conceptual design, and (3) deployment, where the conceptual design is realized and evaluated. Throughout this work, three accompaniment systems were developed and refined.

The first work, detailed in Chapters 3 and 4, aimed to develop a lightweight system on which future, more sophisticated designs could be based. This system was based on a transformer model that was developed to convert a monotonic (single voice) rhythmic loop (groove) into a full multi-voice drum loop. The concept explored here was to investigate whether a loop-based system could be effectively used for generating drum accompaniments in long evolving improvisational sessions. The resulting system was evaluated by professional musician Raül Refree, who provided valuable insights on how the design could be modified to better suit the task.

Following these evaluations, the second system, GrooveTransformer, was developed (discussed in Chapter 5). In this work, rather than relying on our personal speculations, we collaborated with Refree from the outset of the project. As such, we were able to develop a system that was far more suitable for the task at hand, to the extent that the musician felt comfortable performing with the system in a public live improvisational session. While still loop-based, the generative model in this work was based on a variational transformer that enabled us to address the majority of the collaborating musician’s requirements for the system. Although initially deployed as software, we also developed a hardware Eurorack version (discussed in Chapter 6). The Eurorack module was designed to encourage experimentation and exploration beyond the system’s original intent.

In the third system (discussed in Chapter 7), we moved beyond the loop-based approach. The primary goal was to enhance the system’s awareness of the evolving performance over extended durations. To this end, we developed a new generative model with a much larger context. The larger model’s computational demands required a thorough exploration of both conceptual and technical deployment strategies.

All of these systems focused on converting a monotonic groove into a multi-voice drum pattern. In Chapter 8, we first discuss the limitations and affordances of basing the generations solely on groove. Additionally, several works and proposals surrounding this groove-to-drum approach are discussed in detail: (1) how to improve the process of extracting grooves from polyphonic sources, (2) how to make this approach more accommodating for individuals with varying levels of musical experience, (3) how to expand the concept to generate general rhythms rather than exclusively drums, and (4) how to extract groove from audio sources.

Beyond the primary objectives, this research also yielded several significant secondary contributions that arose from the explorations conducted. One such achievement was that we were able to establish that our systems can also be adapted to work with audio without major architectural changes (Appendix A). Moreover, we created NeuralMidiFx (Appendix B), a wrapper designed to facilitate the deployment of neural networks in VST (Virtual Studio Technology) format. This tool was developed to overcome the technical challenges encountered during the real-time deployment of the generative models. Furthermore, two novel datasets, TapTamDrum (Appendix C) and El Bongosero (Appendix D), were created as part of this research. These datasets serve as valuable resources for future studies on both rhythm generation and rhythm analysis.

BibTeX

@phdthesis{HakiPhDThesis,
  title = {Design, development, and deployment of real-time drum accompaniment systems},
  author = {Haki, Behzad},
  year = {2024},
  month = dec,
  address = {Barcelona, Spain},
  school = {Music Technology Group, Universitat Pompeu Fabra},
  type = {PhD thesis},
}

Overview

Source Code

Daisy Seed Source (C++)

Libre (or RPi) Code (Python)

Additional Info

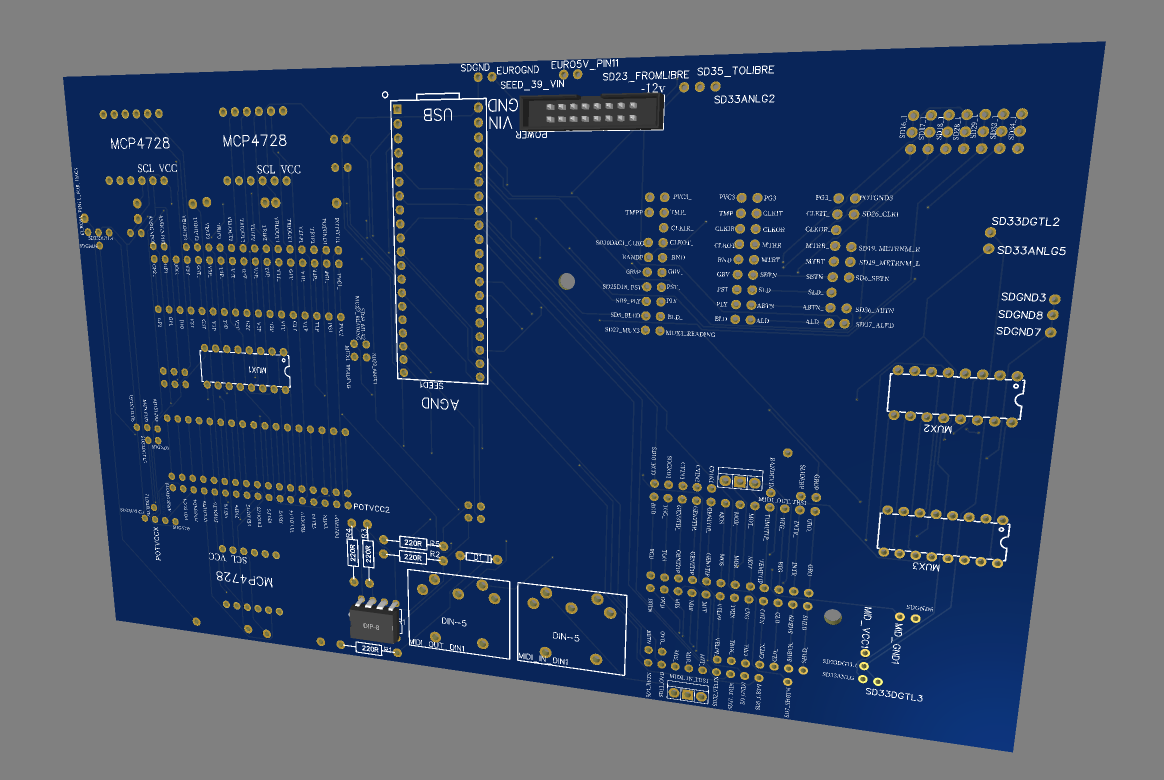

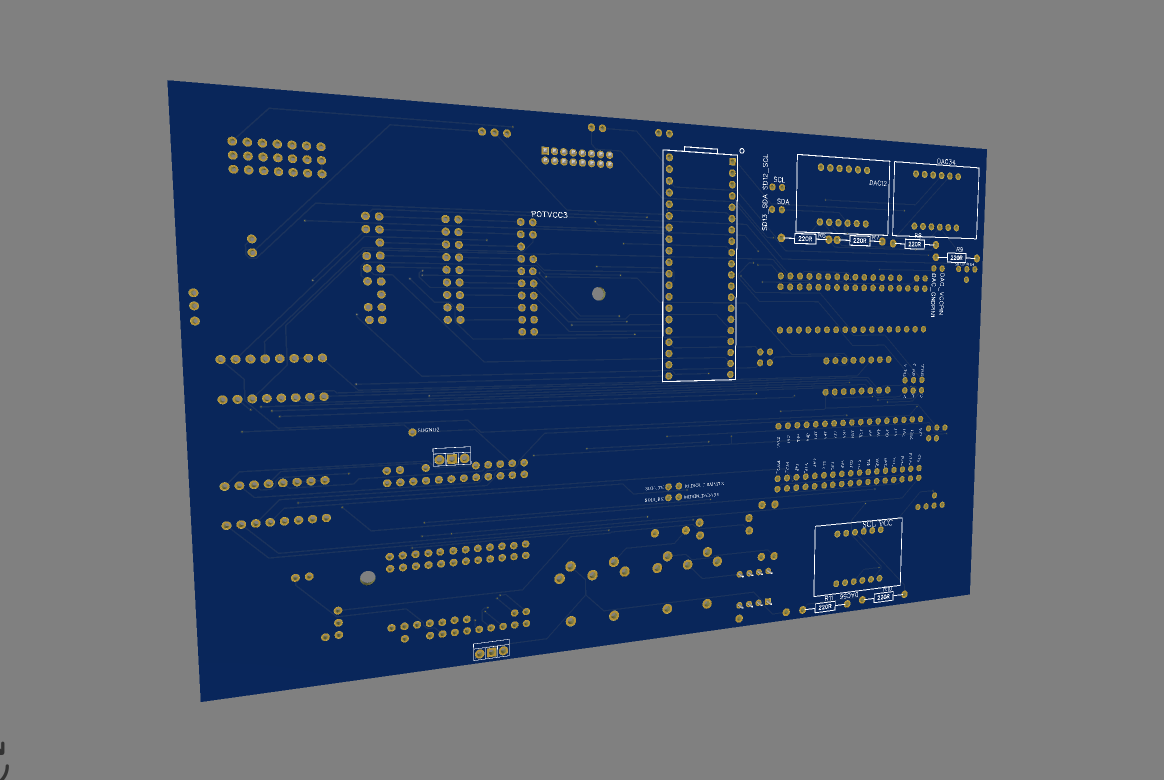

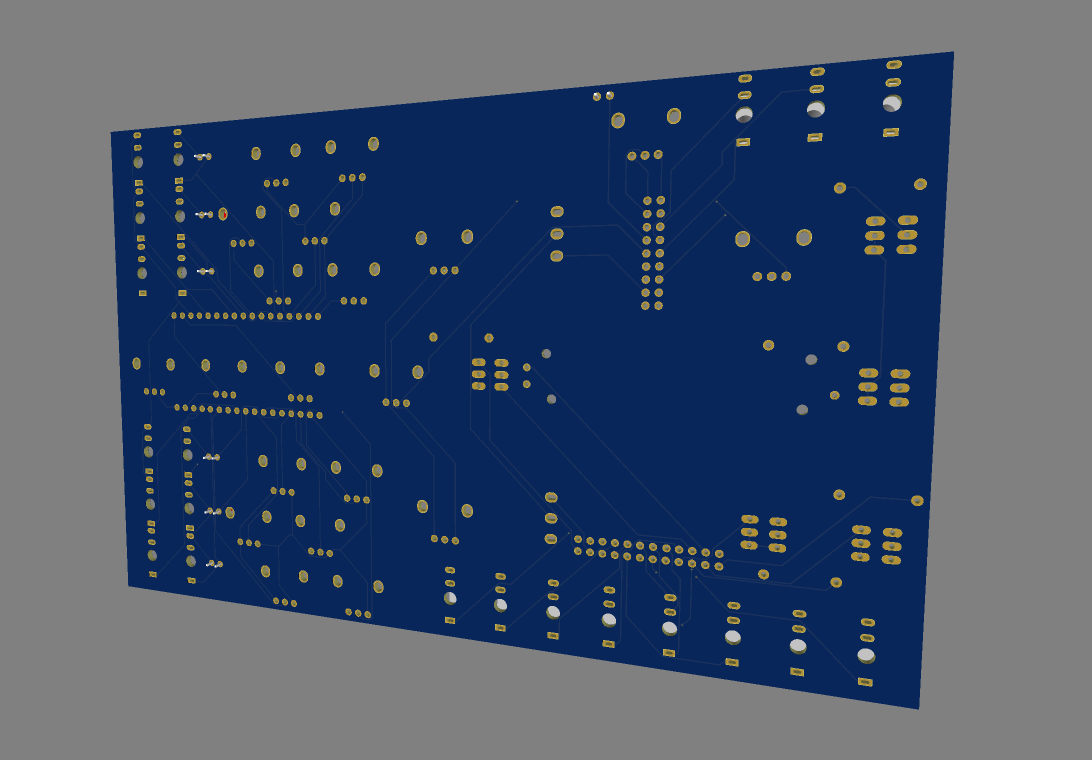

PCB

Schematics

Gerber Files

JLCPCB Production Files

Images

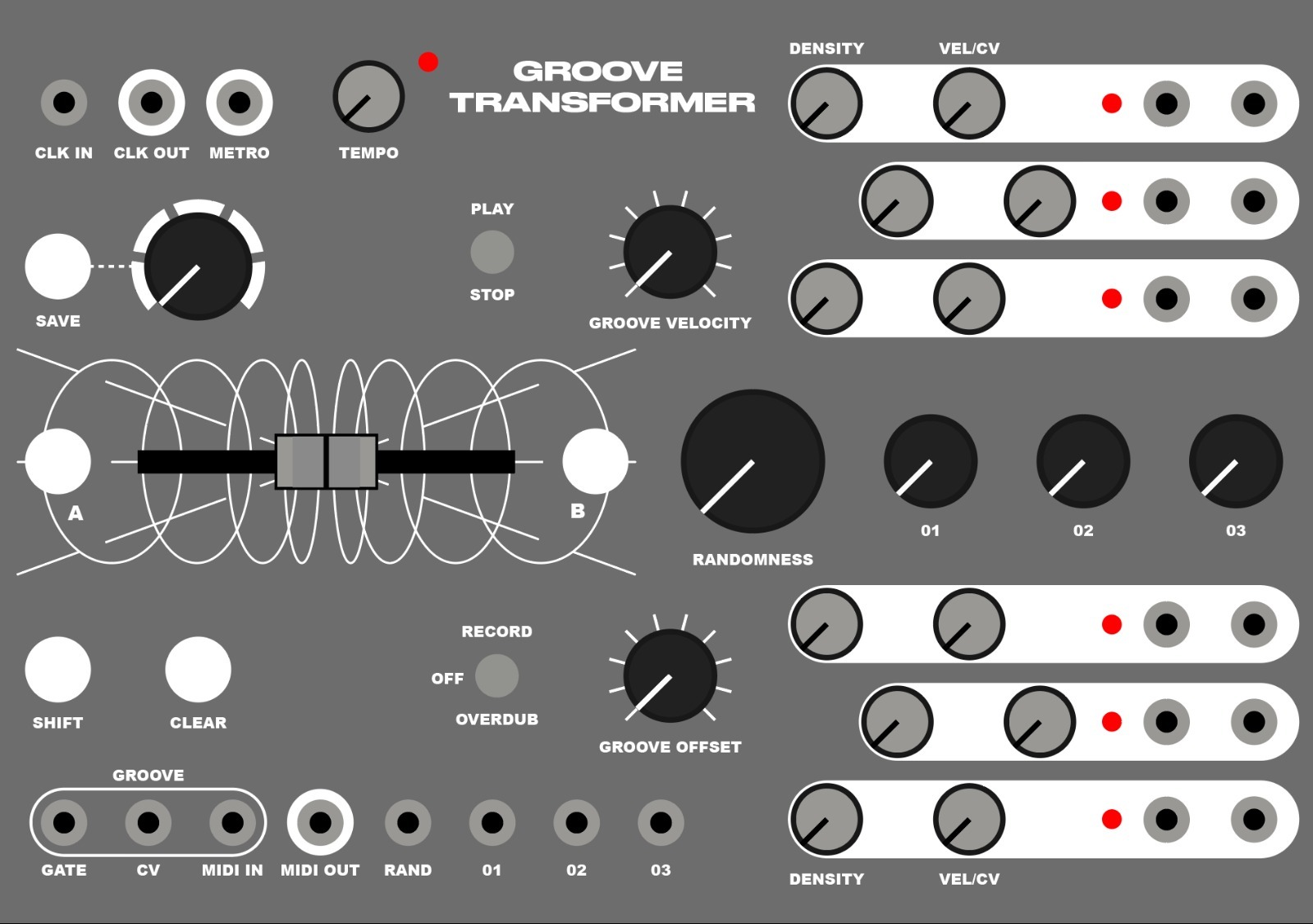

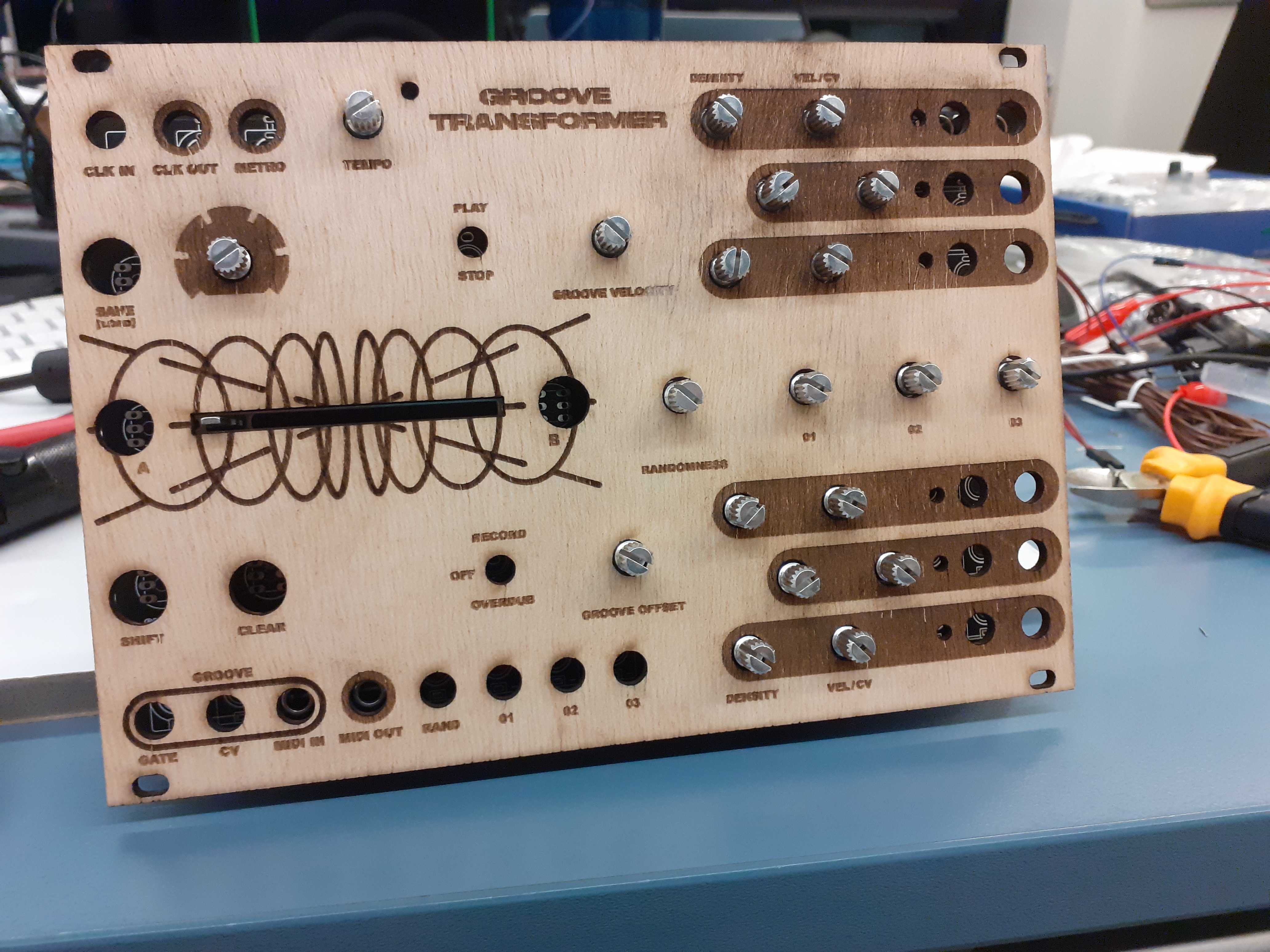

Faceplate

Design

Different Finishes

No Faceplate:

Laser Cut and Laser Etched Wood:

Glass:

Glass with Printed Sticker:

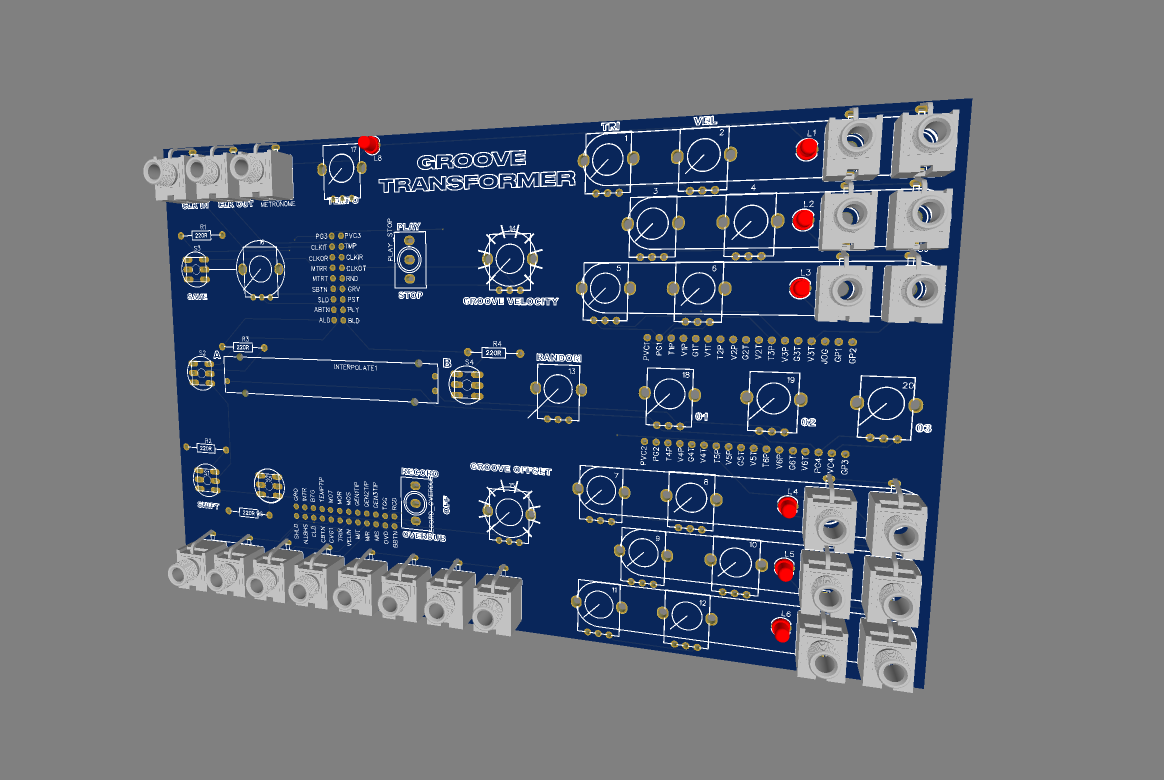

Interface Controls and Parameters

| Section | Figure Index | Name | Description |
|---|---|---|---|
| Utility Parameters | 1 | Internal Clock Tempo Knob | Sets the internal clock tempo |
| | 2 | Internal Clock CV Output | CV clock output |
| | 3 | External Clock CV Input | Input for external CV clock source |
| | 4 | Metronome Click Output | Metronome click audio output |
| | 5 | Play/Stop Switch | Starts and stops the outputs and input groove record buffer |
| | 6 | Record/Overdub/Off Switch | Three-way switch to change the mode of the input groove record buffer |
| | 7 | Clear Button | Clears the input groove record buffer |
| | 8 | Shift Button | Enables secondary functions of other buttons |
| CV/MIDI Pattern Generation Output | 9 | CV Gate Voice Output | MIDI output and CV gate and velocity output for each voice |
| | 10 | CV Velocity Voice Output | MIDI output and CV gate and velocity output for each voice |
| | 11 | MIDI Output | MIDI output and CV gate and velocity output for each voice |
| Input Groove Control | 12 | Input Groove CV Gate Input | MIDI input and CV gate and velocity input for input groove |
| | 13 | Input Groove CV Velocity Input | MIDI input and CV gate and velocity input for input groove |
| | 14 | Input Groove MIDI Input | MIDI input and CV gate and velocity input for input groove |
| | 15 | Input Groove Velocity Knob | Quantizes the input groove to the grid and quantizes the velocity of each note |
| | 16 | Input Groove Offset Knob | Quantizes the input groove to the grid and quantizes the velocity of each note |
| Generation Control | 17 | Uncertainty Knob | Sets the value of the Uncertainty parameter |
| | 18 | Uncertainty CV Input | CV input for the Uncertainty parameter |
| | 19 | Voice Density Knob | Controls the number of hits in each sequence by adjusting the threshold of the model |
| | 20 | Voice Velocity Scale Knob | Scales the velocity output. At minimum value, no scaling is applied to the outputs. At maximum value, all velocities are scaled to the maximum value. |
| Latent Space Interpolation | 21 | Preset Selection Knob | Selects the preset number to be loaded or to be saved to. A preset consists of the saved states in the latent space \( Z_A \) and \( Z_B \) |
| | 22 | Preset Save/Load Button | Saves current states \( Z_A \) and \( Z_B \) to the selected preset number. Pressing with shift button loads the preset at the selected preset number |
| | 23 | Save/Randomize A and B Button | Sets the current state to be \( Z_A \) or \( Z_B \) in the latent space. Pressing with shift button generates a random placement for \( Z_A \) or \( Z_B \) in the latent space |
| | 24 | A/B Interpolation Slider | Interpolation position \( \alpha \) between states \( Z_A \) and \( Z_B \) in the latent space |
| | 25 | A/B Interpolation CV Input | CV input to automate the interpolation position \( \alpha \) in the latent space |
| | 26 | Follow Knob | Sets the value of the Follow parameter \( \beta \) in the latent space |
| | 27 | Follow CV Input | CV input to automate the Follow parameter \( \beta \) |
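As a rough illustration of three of the controls listed above (A/B Interpolation, Voice Density, and Voice Velocity Scale), the following Python sketch shows one plausible reading of each description. It is not taken from the module's source code: linear interpolation between the two latent states, a simple probability threshold for density, and a linear blend toward the maximum velocity are all assumptions, and the function names are hypothetical.

```python
from typing import List, Sequence


def _clamp01(x: float) -> float:
    """Clamp a control value to [0, 1], as a knob, slider, or CV range would be bounded."""
    return min(max(x, 0.0), 1.0)


def interpolate_latent(z_a: Sequence[float], z_b: Sequence[float], alpha: float) -> List[float]:
    """Assumed linear interpolation: alpha=0 returns z_a, alpha=1 returns z_b."""
    if len(z_a) != len(z_b):
        raise ValueError("z_a and z_b must have the same dimensionality")
    alpha = _clamp01(alpha)
    return [(1.0 - alpha) * a + alpha * b for a, b in zip(z_a, z_b)]


def apply_density(hit_probs: Sequence[float], threshold: float) -> List[int]:
    """Assumed density control: keep a hit wherever its probability reaches the threshold.

    Lowering the threshold lets more hits through, so the pattern gets denser.
    """
    return [1 if p >= threshold else 0 for p in hit_probs]


def scale_velocities(velocities: Sequence[float], amount: float) -> List[float]:
    """Assumed velocity scaling on normalized velocities in [0, 1].

    amount=0 leaves velocities untouched; amount=1 pushes all of them to 1.0.
    """
    amount = _clamp01(amount)
    return [v + amount * (1.0 - v) for v in velocities]


if __name__ == "__main__":
    z = interpolate_latent([0.0, 2.0], [4.0, 6.0], alpha=0.5)
    hits = apply_density([0.1, 0.5, 0.9], threshold=0.5)
    vels = scale_velocities([0.25, 0.5], amount=1.0)
    print(z, hits, vels)
```

The Follow parameter \( \beta \) is left out on purpose, since the table does not say how it acts on the latent state.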

Demo

Eurorack Jams

Setup:

The GrooveTransformer’s input groove is a copy (taken via a multiple) of the Intellijel Metropolix gate and pitch sequence that controls the Acid Technology Chainsaw voice in the Eurorack. In this scenario, we use the pitch value of each note event to represent the velocity of the input. The pitch and gate voltages are sent from the Eurorack to the GrooveTransformer via an Expert Sleepers ES-9 DC-coupled audio interface and a Cardinal CV to MIDI converter plug-in. Generated drum patterns from the GrooveTransformer are converted to Control Voltage (CV) and sent to the Eurorack via a Polyend Poly 2 MIDI to CV converter.
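The idea of reading a pitch voltage as a velocity can be sketched as a simple range mapping. This is only an illustration: in the setup described here the conversion is done by the Cardinal CV to MIDI plug-in, and the 0 to 5 V input range, the 1 to 127 output range, and the function name `pitch_cv_to_velocity` are assumptions rather than documented settings.

```python
def pitch_cv_to_velocity(volts: float, v_min: float = 0.0, v_max: float = 5.0) -> int:
    """Map a pitch voltage onto a MIDI velocity in 1..127 (assumed ranges).

    Voltages outside [v_min, v_max] are clamped so that every gate still
    produces a valid, non-zero velocity.
    """
    if v_max <= v_min:
        raise ValueError("v_max must be greater than v_min")
    normalized = (volts - v_min) / (v_max - v_min)
    normalized = min(max(normalized, 0.0), 1.0)
    return 1 + round(normalized * 126)


if __name__ == "__main__":
    # Higher notes in the sequence become louder hits in the input groove.
    for volts in (0.0, 1.0, 2.5, 5.0):
        print(volts, pitch_cv_to_velocity(volts))
```

With a mapping like this, the melodic contour of the sequence doubles as an accent pattern for the input groove.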

Drum Synthesis:

We use 7 voices of the generated patterns (kick, snare, open and closed hi-hat, and lo/mid/hi tom) to trigger 4 voices in the Eurorack:

Kick: Schlappi Engineering Angle Grinder + Make Noise Moddemix VCA + Intellijel Quadrax envelope generator

Snare: Intellijel Plonk

Open and Closed Hi-Hats: Basimilus Iteritas Alter

Lo, Mid, Hi Toms: Akemie’s Taiko

To retain the generated dynamics, the kick, hi-hats, and toms are routed to individual channels on a Mutable Instruments Veils. The level of each channel is controlled by the velocity sequence associated with the corresponding voice. The Intellijel Plonk has a dedicated velocity input, which we used instead of routing its signal to Veils.
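The routing described above can be summarized as a small lookup table. The sketch below is just a restatement of the patch in Python: the voice keys, the `Route` type, and the helper function are hypothetical names, and no channel assignments on Veils are implied.

```python
from typing import Dict, List, NamedTuple


class Route(NamedTuple):
    sound_source: str          # module that produces the sound
    velocity_destination: str  # where the generated velocity CV is patched


VEILS = "Mutable Instruments Veils channel"

# Seven generated voices feeding four sound sources.
# The kick chain also passes through a Make Noise Moddemix VCA
# and an Intellijel Quadrax envelope generator.
ROUTING: Dict[str, Route] = {
    "kick": Route("Schlappi Engineering Angle Grinder", VEILS),
    "snare": Route("Intellijel Plonk", "Intellijel Plonk velocity input"),
    "closed_hihat": Route("Basimilus Iteritas Alter", VEILS),
    "open_hihat": Route("Basimilus Iteritas Alter", VEILS),
    "lo_tom": Route("Akemie's Taiko", VEILS),
    "mid_tom": Route("Akemie's Taiko", VEILS),
    "hi_tom": Route("Akemie's Taiko", VEILS),
}


def sound_sources(routing: Dict[str, Route]) -> List[str]:
    """Return the distinct sound sources used by the routing, sorted by name."""
    return sorted({route.sound_source for route in routing.values()})


if __name__ == "__main__":
    for voice, route in ROUTING.items():
        print(f"{voice}: {route.sound_source} (velocity -> {route.velocity_destination})")
```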

Video 1

Video 2

Video 3

Video 4

Module Videos (Exploring Synthesis)

Hardware 1

Hardware 2